In recent years, rapid advances in machine learning (ML) and artificial intelligence (AI) have transformed many industries, enabling data-driven decision-making and the automation of complex tasks. As ML models become integral to business operations, however, organizations need to manage and deploy them efficiently, and this need has given rise to a discipline known as ML Ops (Machine Learning Operations). ML Ops aims to streamline the end-to-end ML lifecycle, from data preparation and model training to deployment and monitoring, so that ML projects are not only developed quickly but also maintained and improved over time.

Understanding the ML Lifecycle

The machine learning lifecycle encompasses a series of interconnected stages, each crucial to the success of an ML project. These stages include:

Data Collection and Preparation: ML projects begin with collecting and preparing relevant data. The quality, diversity, and accuracy of this data strongly influence how well the model performs. This stage involves cleaning, preprocessing, and transforming the data to make it suitable for training.
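
The cleaning and transformation steps described above can be sketched in plain Python. This is a minimal illustration, assuming records arrive as dictionaries of numeric fields; the field names (`age`, `income`) and the helpers `clean` and `min_max_scale` are hypothetical. It drops incomplete rows, removes exact duplicates, and rescales one feature to the range [0, 1].

```python
from typing import Dict, List, Optional

# Hypothetical record format: field name -> numeric value (None means missing).
Record = Dict[str, Optional[float]]


def clean(records: List[Record], required: List[str]) -> List[Record]:
    """Drop records missing any required field, then remove exact duplicates."""
    seen = set()
    cleaned: List[Record] = []
    for record in records:
        if any(record.get(field) is None for field in required):
            continue  # incomplete row: skip it
        key = tuple(sorted(record.items()))  # hashable fingerprint of the row
        if key in seen:
            continue  # exact duplicate of a row already kept
        seen.add(key)
        cleaned.append(record)
    return cleaned


def min_max_scale(records: List[Record], field: str) -> List[Record]:
    """Rescale one numeric field to [0, 1]; expects a non-empty list."""
    values = [record[field] for record in records]
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1.0  # avoid division by zero when all values are equal
    return [{**record, field: (record[field] - lo) / span} for record in records]


raw = [
    {"age": 30.0, "income": 50.0},
    {"age": None, "income": 40.0},  # missing age: dropped
    {"age": 30.0, "income": 50.0},  # duplicate: dropped
    {"age": 50.0, "income": 70.0},
]
scaled = min_max_scale(clean(raw, ["age", "income"]), "age")
print(scaled)  # [{'age': 0.0, 'income': 50.0}, {'age': 1.0, 'income': 70.0}]
```

In a real pipeline the scaling bounds (`lo`, `hi`) should be computed on the training split only and reused at prediction time; otherwise information from evaluation data leaks into training.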

Model Development: During this stage, data scientists and machine learning engineers develop and experiment with candidate models. This includes selecting appropriate algorithms, tuning hyperparameters, and training models, typically on labeled data for supervised tasks.
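
Hyperparameter tuning is often done with a grid search: train one model per combination of candidate values and keep the combination that scores best on a validation set. The sketch below assumes a caller-supplied `evaluate` function that trains a model with the given hyperparameters and returns its validation score; `toy_validation_score` and the hyperparameter names are hypothetical stand-ins so the example runs on its own.

```python
import itertools
from typing import Any, Callable, Dict, List, Optional, Tuple

Params = Dict[str, Any]


def grid_search(
    param_grid: Dict[str, List[Any]],
    evaluate: Callable[[Params], float],
) -> Tuple[Optional[Params], float]:
    """Try every combination in param_grid and return the best (params, score)."""
    names = list(param_grid)
    best_params: Optional[Params] = None
    best_score = float("-inf")
    for values in itertools.product(*(param_grid[name] for name in names)):
        params = dict(zip(names, values))
        score = evaluate(params)  # higher is better, e.g. validation accuracy
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_validation_score(params: Params) -> float:
    # Hypothetical stand-in for "train with params, score on validation data";
    # it peaks at learning_rate=0.1, max_depth=3.
    return 1.0 - (params["learning_rate"] - 0.1) ** 2 - (params["max_depth"] - 3) ** 2


best, score = grid_search(
    {"learning_rate": [0.01, 0.1, 1.0], "max_depth": [2, 3, 5]},
    toy_validation_score,
)
print(best, score)  # {'learning_rate': 0.1, 'max_depth': 3} 1.0
```

The cost grows multiplicatively with each added hyperparameter (here 3 × 3 = 9 training runs), which is why random search or more sample-efficient tuners are often preferred for larger search spaces.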

Model Evaluation: Once models are trained, they must be evaluated on data they did not see during training, such as a held-out test set, to estimate how they will perform in practice. For classification tasks, this typically involves metrics such as accuracy, precision, recall, and F1-score.

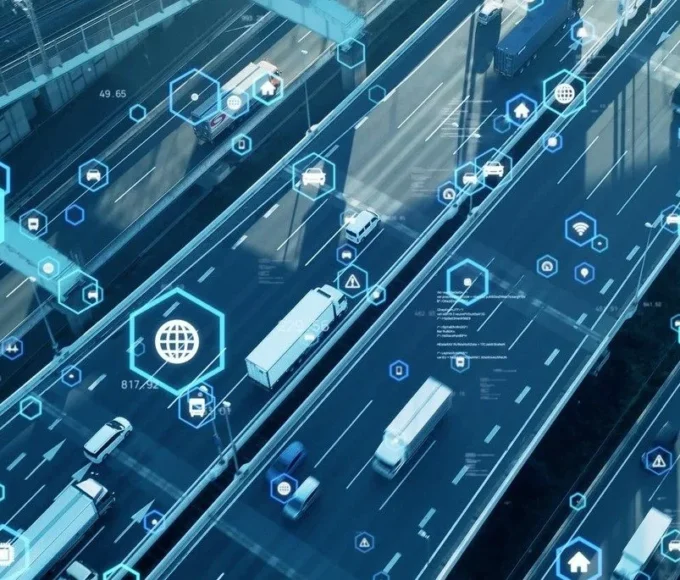
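
The four evaluation metrics named above can be computed directly from the counts of true and false positives and negatives. This sketch assumes a binary classification task with labels 0 and 1, where 1 is the positive class; libraries such as scikit-learn (`sklearn.metrics`) provide equivalent, more general implementations.

```python
from typing import Dict, Sequence


def classification_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 1, 0, 0, 0, 0]
metrics = classification_metrics(y_true, y_pred)
print({name: round(value, 3) for name, value in metrics.items()})
# {'accuracy': 0.7, 'precision': 0.6, 'recall': 0.75, 'f1': 0.667}
```

The example shows why accuracy alone can mislead: the model gets 70% of labels right, but only 60% of its positive predictions are correct.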

Deployment: Deploying ML models into production is a significant challenge. It involves integrating the model into the existing software infrastructure while ensuring scalability, reliability, and security. The deployed model must handle real-world data and, in many applications, return predictions in real time.

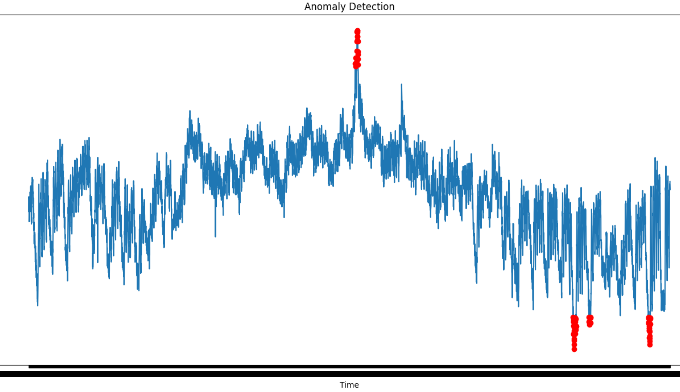
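
A minimal sketch of real-time serving, using only Python's standard `http.server` module: a POST endpoint that validates a JSON body and returns a prediction. The "model" here is a hypothetical fixed linear scorer (`WEIGHTS`, `BIAS`), and the request shape `{"features": [...]}`, host, and port are assumptions, not a standard.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Any, Dict, List, Tuple

# Hypothetical stand-in for a trained model: a fixed linear scorer.
WEIGHTS = [0.4, -0.2, 0.1]
BIAS = 0.05


def predict(features: List[float]) -> float:
    return sum(w * x for w, x in zip(WEIGHTS, features)) + BIAS


def handle_request(body: bytes) -> Tuple[int, Dict[str, Any]]:
    """Validate a JSON request body and return (HTTP status, response payload)."""
    try:
        features = json.loads(body)["features"]
    except (ValueError, KeyError, TypeError):
        return 400, {"error": "expected a JSON object with a 'features' field"}
    if (
        not isinstance(features, list)
        or len(features) != len(WEIGHTS)
        or not all(isinstance(x, (int, float)) for x in features)
    ):
        return 400, {"error": f"'features' must be a list of {len(WEIGHTS)} numbers"}
    return 200, {"prediction": predict(features)}


class PredictionHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        status, payload = handle_request(self.rfile.read(length))
        data = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


def serve(host: str = "127.0.0.1", port: int = 8000) -> None:
    """Block and serve predictions until interrupted (not called in this sketch)."""
    HTTPServer((host, port), PredictionHandler).serve_forever()
```

After calling `serve()`, a POST to `http://127.0.0.1:8000/` with body `{"features": [1.0, 2.0, 3.0]}` returns a JSON object with a `prediction` field. Keeping validation in `handle_request`, separate from the HTTP plumbing, makes it easy to unit-test; a production service would typically add concurrency, authentication, and request logging, often via a dedicated serving framework.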

Monitoring and Maintenance: After deployment, continuous monitoring is essential to track the model's performance and detect anomalies. Models can degrade over time as the distribution of incoming data shifts (data drift) or as the relationship between inputs and outcomes changes (concept drift), so they need regular updates and retraining.
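
One common way to detect a changing data distribution is the Population Stability Index (PSI), which compares how a feature's live values fall into bins relative to a reference sample such as the training data. The sketch below uses equal-width bins; the function names, the 10-bin default, and the smoothing constant `eps` are assumptions. A frequently quoted rule of thumb treats a PSI above roughly 0.2 as a significant shift, but thresholds are conventions and are best tuned per feature.

```python
import bisect
import math
from typing import List, Sequence


def _bin_fractions(values: Sequence[float], edges: List[float]) -> List[float]:
    """Fraction of values per bin; out-of-range values go to the outermost bins."""
    n_bins = len(edges) - 1
    counts = [0] * n_bins
    for v in values:
        idx = bisect.bisect_right(edges, v) - 1
        counts[min(max(idx, 0), n_bins - 1)] += 1
    return [c / len(values) for c in counts]


def population_stability_index(
    reference: Sequence[float], live: Sequence[float], n_bins: int = 10, eps: float = 1e-6
) -> float:
    """PSI = sum over bins of (live% - ref%) * ln(live% / ref%)."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins or 1.0  # guard against a constant reference feature
    edges = [lo + i * width for i in range(n_bins + 1)]
    ref = _bin_fractions(reference, edges)
    cur = _bin_fractions(live, edges)
    psi = 0.0
    for r, c in zip(ref, cur):
        r, c = max(r, eps), max(c, eps)  # avoid log(0) for empty bins
        psi += (c - r) * math.log(c / r)
    return psi


reference = [i / 100 for i in range(100)]      # e.g. a feature at training time
shifted = [0.5 + i / 200 for i in range(100)]  # the same feature, drifted upward
print(population_stability_index(reference, reference))  # 0.0
print(population_stability_index(reference, shifted) > 0.2)  # True
```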

Iterative Improvement: ML models are not static; they require constant improvement. This stage involves analyzing feedback, identifying areas of improvement, and incorporating new data to enhance the model’s accuracy and effectiveness.
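
One concrete way to identify areas of improvement is error analysis by slice: compute accuracy separately for each segment of the evaluation data and look at the weakest segments first. This sketch assumes each example carries a segment label (the values `"mobile"` and `"web"` are hypothetical); the weakest slices suggest where new data or features may help most.

```python
from collections import defaultdict
from typing import Dict, Hashable, List, Sequence, Tuple


def accuracy_by_slice(
    slices: Sequence[Hashable], y_true: Sequence[int], y_pred: Sequence[int]
) -> List[Tuple[Hashable, float, int]]:
    """Return (slice, accuracy, count) tuples sorted from weakest to strongest."""
    correct: Dict[Hashable, int] = defaultdict(int)
    total: Dict[Hashable, int] = defaultdict(int)
    for s, t, p in zip(slices, y_true, y_pred):
        total[s] += 1
        correct[s] += int(t == p)
    report = [(s, correct[s] / total[s], total[s]) for s in total]
    return sorted(report, key=lambda row: row[1])


report = accuracy_by_slice(
    ["mobile", "mobile", "web", "web", "web"],
    [1, 0, 1, 1, 0],
    [0, 0, 1, 1, 0],
)
print(report)  # [('mobile', 0.5, 2), ('web', 1.0, 3)]
```

Small slices give noisy estimates, so the count column is worth checking before acting on a low accuracy.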

The Role of ML Ops

ML Ops emerged from the need to manage the complexities of deploying and maintaining ML models in real-world environments. It draws inspiration from DevOps practices, which focus on automating and optimizing software development and deployment. ML Ops aims to:

Automate Processes: Automation is at the heart of ML Ops. It involves automating tasks like data preprocessing, model training, and deployment, reducing manual errors and speeding up the development cycle.
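
At its simplest, automating the workflow means expressing each stage as a function and running the stages in a fixed order with logging and fail-fast error handling. This is a minimal sketch; the step names and the shared `context` dictionary are assumptions, and teams commonly use a workflow orchestrator (for example Apache Airflow or Kubeflow Pipelines) that adds scheduling, retries, and distributed execution.

```python
import logging
from typing import Any, Callable, Dict, List, Tuple

Context = Dict[str, Any]
Step = Tuple[str, Callable[[Context], Context]]


def run_pipeline(steps: List[Step], context: Context) -> Context:
    """Run steps in order, passing each step's output context to the next."""
    for name, step in steps:
        logging.info("running step %s", name)
        try:
            context = step(context)
        except Exception as exc:
            # Stop the whole run and report which step failed.
            raise RuntimeError(f"pipeline step {name!r} failed") from exc
    return context


# Hypothetical toy steps; a real pipeline would load data, fit a model, etc.
def load_data(ctx: Context) -> Context:
    return {**ctx, "data": [1.0, 2.0, 3.0, 4.0]}


def train(ctx: Context) -> Context:
    # "Model" that always predicts the training mean.
    return {**ctx, "model_mean": sum(ctx["data"]) / len(ctx["data"])}


def evaluate(ctx: Context) -> Context:
    errors = [abs(x - ctx["model_mean"]) for x in ctx["data"]]
    return {**ctx, "mae": sum(errors) / len(errors)}  # mean absolute error


result = run_pipeline(
    [("load_data", load_data), ("train", train), ("evaluate", evaluate)], {}
)
print(result["model_mean"], result["mae"])  # 2.5 1.0
```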

Version Control: Just as in software development, version control is crucial in ML to track changes to data, code, and models over time. This ensures reproducibility and facilitates collaboration among teams.
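
Git handles code well, but large datasets and model files are usually versioned with dedicated tools such as DVC or Git LFS. The core idea can be sketched with content hashes: record the hash of the exact data, the model artifact, the code commit, and the training parameters in a manifest, so any model can be traced back to what produced it. The manifest fields, the `build_manifest` helper, and the commit string `"abc1234"` are hypothetical.

```python
import hashlib
import json
from typing import Any, Dict


def sha256_bytes(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def sha256_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large datasets need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(
    data_hash: str, model_hash: str, code_commit: str, params: Dict[str, Any]
) -> Dict[str, Any]:
    """Record exactly which data, code, and settings produced a model."""
    manifest: Dict[str, Any] = {
        "data_sha256": data_hash,
        "model_sha256": model_hash,
        "code_commit": code_commit,  # e.g. the Git commit the training code ran at
        "params": params,
    }
    # Deterministic ID: identical inputs always yield the same run ID.
    canonical = json.dumps(manifest, sort_keys=True).encode("utf-8")
    manifest["run_id"] = hashlib.sha256(canonical).hexdigest()[:12]
    return manifest


m1 = build_manifest(sha256_bytes(b"age,income\n30,50\n"), sha256_bytes(b"model-v1"),
                    "abc1234", {"max_depth": 3})
m2 = build_manifest(sha256_bytes(b"age,income\n30,55\n"), sha256_bytes(b"model-v1"),
                    "abc1234", {"max_depth": 3})
print(m1["run_id"] != m2["run_id"])  # True: a one-value data change yields a new version
```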

Containerization: ML Ops often relies on containerization technologies like Docker to package models and their dependencies into isolated environments. This makes it easier to deploy models consistently across different platforms.

Continuous Integration and Continuous Deployment (CI/CD): CI/CD pipelines automate the process of integrating code changes, testing them, and deploying them to production. This approach enables rapid and reliable model deployment.
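
For ML models, a CI/CD pipeline often needs a quality gate in addition to ordinary unit tests: a candidate model is only promoted if it performs at least as well as the model currently in production. This sketch assumes F1 on a fixed test set and a p95 latency budget as the gate criteria; the `EvalReport` and `deployment_gate` names and all numbers are illustrative.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class EvalReport:
    f1: float              # quality on a fixed held-out test set
    p95_latency_ms: float  # 95th-percentile prediction latency


def deployment_gate(
    candidate: EvalReport,
    baseline: EvalReport,
    min_f1_gain: float = 0.0,
    max_latency_ms: float = 100.0,
) -> List[str]:
    """Return the reasons to block deployment; an empty list means the gate passes."""
    failures = []
    required_f1 = baseline.f1 + min_f1_gain
    if candidate.f1 < required_f1:
        failures.append(f"F1 {candidate.f1:.3f} is below the required {required_f1:.3f}")
    if candidate.p95_latency_ms > max_latency_ms:
        failures.append(
            f"p95 latency {candidate.p95_latency_ms:.0f} ms exceeds {max_latency_ms:.0f} ms"
        )
    return failures


print(deployment_gate(EvalReport(0.82, 45.0), EvalReport(0.80, 40.0)))  # []
```

In a CI job, a script would typically end with `sys.exit(1 if failures else 0)`, so that a non-zero exit code fails the pipeline and blocks the release.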

Monitoring and Feedback: ML Ops emphasizes continuous monitoring of deployed models. Monitoring helps detect issues such as degraded performance or anomalies in real time and triggers maintenance or updates.
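
A simple form of this monitor-and-trigger loop: once ground-truth labels arrive for served predictions, track accuracy over a sliding window and call a hook when it drops below a threshold. This sketch assumes labels become available some time after prediction; `on_alert` is a hypothetical hook (it could page an on-call engineer or start a retraining job), and the window size and threshold are illustrative.

```python
from collections import deque
from typing import Callable, Deque


class RollingAccuracyMonitor:
    """Track accuracy over the last `window` labeled predictions and alert on drops."""

    def __init__(self, window: int, threshold: float, on_alert: Callable[[float], None]):
        self.outcomes: Deque[int] = deque(maxlen=window)  # 1 = correct, 0 = wrong
        self.threshold = threshold
        self.on_alert = on_alert
        self.alerting = False  # True while accuracy stays below the threshold

    def record(self, prediction: int, label: int) -> None:
        self.outcomes.append(int(prediction == label))
        if len(self.outcomes) < self.outcomes.maxlen:
            return  # not enough feedback yet for a stable estimate
        accuracy = sum(self.outcomes) / len(self.outcomes)
        if accuracy < self.threshold and not self.alerting:
            self.alerting = True
            self.on_alert(accuracy)  # fire once per incident, not on every record
        elif accuracy >= self.threshold:
            self.alerting = False  # recovered; a future drop will alert again


alerts = []
monitor = RollingAccuracyMonitor(window=4, threshold=0.75, on_alert=alerts.append)
for pred, label in [(1, 1), (0, 0), (1, 1), (1, 1), (1, 0), (0, 1), (1, 0)]:
    monitor.record(pred, label)
print(alerts)  # [0.5]
```

Alerting only on the transition below the threshold keeps a sustained degradation from flooding the alert channel.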

Collaboration: ML Ops encourages collaboration between data scientists, machine learning engineers, and IT operations teams. Clear communication and collaboration facilitate the smooth flow of ML projects from development to production.

Benefits of ML Ops

Implementing ML Ops practices offers several benefits:

Faster Time-to-Market: Automation and streamlined processes reduce the time required to develop and deploy ML models, enabling businesses to respond quickly to changing market needs.

Enhanced Collaboration: ML Ops promotes collaboration between cross-functional teams, breaking down silos and fostering a shared understanding of project goals.

Improved Model Performance: Continuous monitoring and iterative improvement help keep deployed models effective and accurate over time.

Reduced Risk: Automation and monitoring help identify and mitigate issues early, reducing the risk of faulty models causing disruptions in production systems.

Scalability: ML Ops practices facilitate the deployment of models at scale, accommodating increased workloads and demand.

Challenges and Future Directions

While ML Ops offers numerous advantages, it also comes with challenges. Managing the complexity of different tools, maintaining version control for data and models, and ensuring data privacy and security remain ongoing concerns. Additionally, the rapidly evolving landscape of machine learning tools and frameworks requires constant adaptation.

Looking ahead, the ML Ops field is likely to keep evolving. Integration with cloud services, the development of specialized ML Ops platforms, and the incorporation of AI-driven automation are trends to watch. As more businesses recognize the value of streamlined ML lifecycles, ML Ops practices will likely become standard across the industry.

ML Ops has emerged as a critical discipline in the realm of machine learning, addressing the challenges associated with developing, deploying, and maintaining ML models. By integrating automation, collaboration, and monitoring, ML Ops streamlines the end-to-end machine learning lifecycle, enabling businesses to harness the power of AI and drive innovation with greater efficiency and reliability.

As technology continues to advance, ML Ops will play a pivotal role in shaping the future of machine learning.
